Insights: sharing what we know

We'd like to share some of our insights with you. These are based on our experience over many years.

We present them here in summarised form for your convenience; however, each one is based on detailed evidence and research, supported by multiple data points as well as our own experience.

Contents

- Use Open Source

- Blockchain is a Distraction

- Spreadsheets Considered Harmful

- ChatGPT and Artificial Intelligence

- Hydrogen is Not the Answer

If any of these insights spark your interest, please do get in touch and we can tell you more.

We will keep adding to and updating these articles, so please check back from time to time.

Use Open Source

Open Source is our not-so-secret weapon. It has also been one of the main factors enabling our company to operate at lower cost and with much greater agility.

At Telos Digital, Open Source is particularly critical. It is both a part of our strategic advice and a way for us, or our customers, to build and modify systems without the huge expense of starting everything from scratch. It also gives far greater privacy, security, and control over our own destiny.

While Open Source now dominates much of the world of technology, it's surprising how many (large) companies haven't yet caught on!

Blockchain is a Distraction

Blockchain is a solution in search of a problem. In every case we've looked at, "technology x with blockchain" is worse than the corresponding "technology x without blockchain". We'll explain why it's all hype, or "an amazing solution for almost nothing".

There are only 3 exceptions to this rule:

- Bitcoin – which has some limited use as a highly volatile, pseudonymous currency,

- NFTs – which are primarily "useful" for money-laundering, and

- Physical blockchain – a chain, secured to a concrete block, used for preventing bicycle theft!

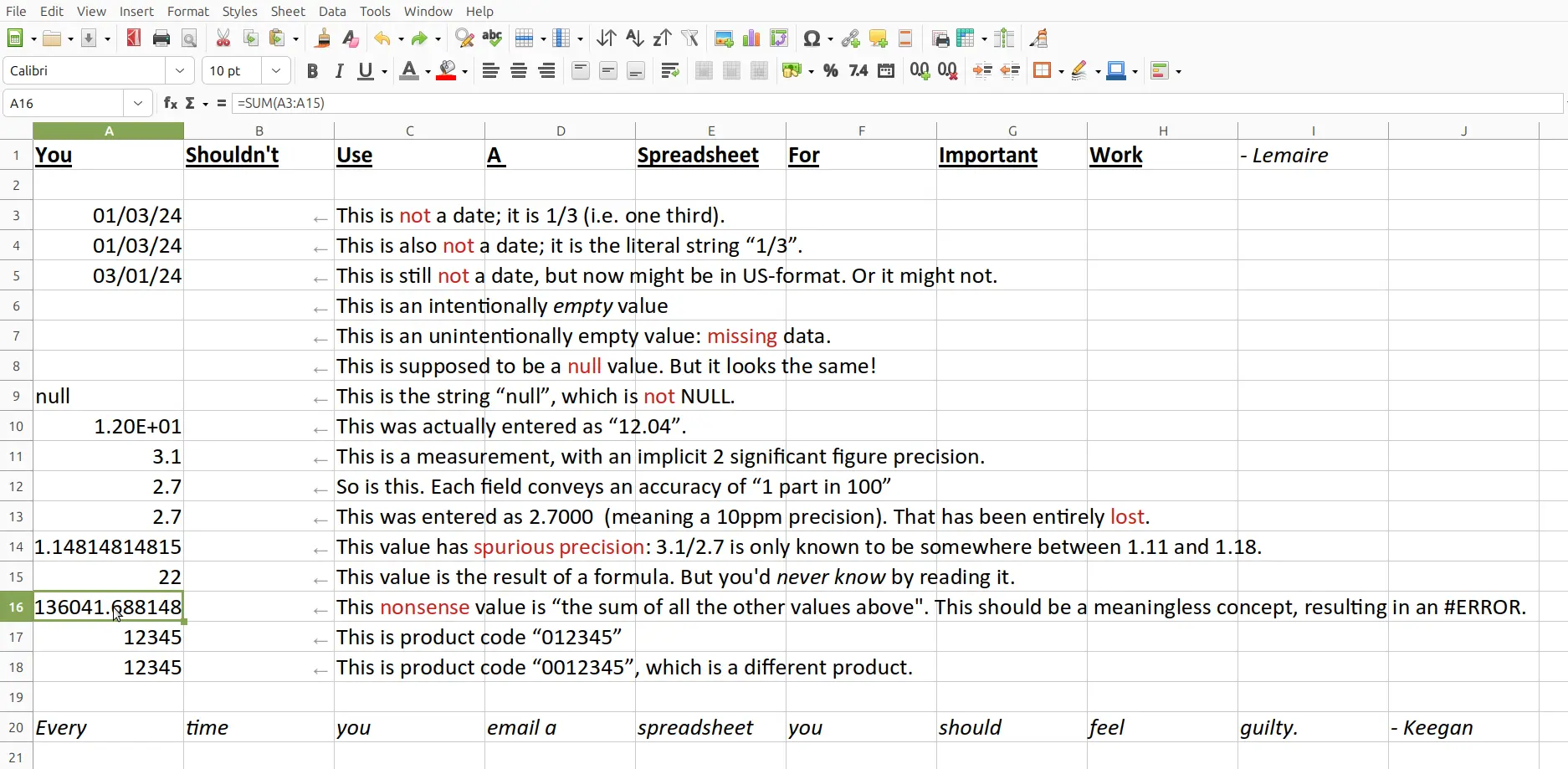

Spreadsheets Considered Harmful

While spreadsheets make simple tasks very easy, they make moderately complex tasks much harder than they should be, leading to a high probability of undetected errors. Because spreadsheets are so common, these errors can have severe and wide-ranging consequences. So, except for the simplest tasks, the spreadsheet is the wrong tool for every job.

Spreadsheets are good for a few things though:

- Xmas card lists – simple lists of low-criticality information.

- Quick and dirty models – one-off temporary things, not intended to be shared.

- Crashing the economy – UK austerity policy was justified in part by economic research that contained a spreadsheet error.

ChatGPT and Artificial Intelligence

Artificial intelligence is not the future; it's the present. It's up to us to ensure that its power is wielded for the betterment of humanity, rather than to its detriment.

ChatGPT is a neural-network model, trained on much of the sum of human knowledge, with a human-friendly, easy-to-use chat interface. Try it, free, at chat.openAI. This technology has the potential for huge improvements in productivity and competency, in the same way that the Internet, Wikipedia, and the industrial revolution radically changed the way humans learn, think, and work.

It isn't (yet) a true artificial intelligence, but interacting with it often feels like talking to one. Large language models (LLMs), based on the generative pre-trained transformer (GPT) method, promise massive benefits to humanity, as well as some scary consequences, as shown in this overview. However we feel about this, we all need to learn about it, or be out-competed by those who do.

Hydrogen is Not the Answer

On the face of it, hydrogen is "the king of fuels", with a high energy density per kilogram and zero carbon dioxide emissions at the point of use.

In practice, it's not that simple. Storage problems and low volumetric energy density rule out most applications, while compatibility issues mean that the natural-gas grid could never safely switch to hydrogen. Using hydrogen is also less energy-efficient than other zero-carbon alternatives.

The key problems for hydrogen, as a fuel, are:

- Volumetric energy density – compressed or even liquid hydrogen takes up a huge volume for a given amount of energy.

- Embrittlement and leakage – hydrogen damages pipework and leaks easily, so special materials are needed to prevent leaks.

- Hydrogen source – there are few natural sources of hydrogen, and if we make it from methane, the CO2 emissions simply happen elsewhere.
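The volumetric problem can be shown with simple arithmetic. Energy per litre is energy per kilogram multiplied by density in kilograms per litre.

The short Python sketch below uses rounded, commonly quoted values, which are our illustrative assumptions rather than figures from the full article:

- a lower heating value of about 120 MJ/kg for hydrogen and 43 MJ/kg for petrol;
- a density of about 0.071 kg/L for liquid hydrogen;
- a density of about 0.040 kg/L for hydrogen compressed to 700 bar;
- a density of about 0.745 kg/L for petrol.

The helper names are hypothetical. The sketch counts stored fuel energy only, ignoring tank weight and differences in engine or fuel-cell efficiency.

```python
# Rough volumetric energy-density comparison.
# All property values are rounded, illustrative assumptions (not measured data):
# (lower heating value in MJ/kg, density in kg/L).
FUELS = {
    "hydrogen, liquid (~20 K)": (120.0, 0.071),
    "hydrogen, compressed (700 bar)": (120.0, 0.040),
    "petrol": (43.0, 0.745),
}


def volumetric_energy_density(mj_per_kg: float, kg_per_litre: float) -> float:
    """Energy per litre (MJ/L) = energy per kilogram (MJ/kg) x density (kg/L)."""
    return mj_per_kg * kg_per_litre


def litres_for_energy(energy_mj: float, mj_per_kg: float, kg_per_litre: float) -> float:
    """Fuel volume (L) needed to store a given amount of chemical energy (MJ)."""
    return energy_mj / volumetric_energy_density(mj_per_kg, kg_per_litre)


if __name__ == "__main__":
    # Reference point: the fuel energy in a 50 L petrol tank (tank mass ignored).
    target_mj = 50 * volumetric_energy_density(*FUELS["petrol"])
    for name, (mj_kg, kg_l) in FUELS.items():
        print(
            f"{name:32s} {mj_kg:6.1f} MJ/kg "
            f"{volumetric_energy_density(mj_kg, kg_l):6.2f} MJ/L "
            f"{litres_for_energy(target_mj, mj_kg, kg_l):7.1f} L"
        )
```

Under these assumptions, hydrogen carries nearly three times the energy of petrol per kilogram. Yet storing the energy of a 50 L petrol tank needs roughly 190 L of liquid hydrogen, or more than 330 L at 700 bar, before the tank itself is counted.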

We originally set out to write an article about how hydrogen was the solution… but the data entirely changed our point of view.

![Hydrogen (supernova), emission spectrum, and a methane molecule. (Methane is the most common source of H2.)](refract.php?insight=hydrogen.webp)